A few personal notes and observations in the context of my aims in attending!

Instructional Designers to mediate the locus of control in adaptive eLearning Systems

The goal of Learning Analytics tends to be adaptive modeling. But who validates these models? The proposition here is that there is a tension between adaptable (HCI) and adaptive (AI) systems, and that Instructional Designers can play a role as pattern finders and mediators of adaptation for adaptive learning analytics systems. Should we be building analytics systems to help instructional designers (not students)?

Maybe!

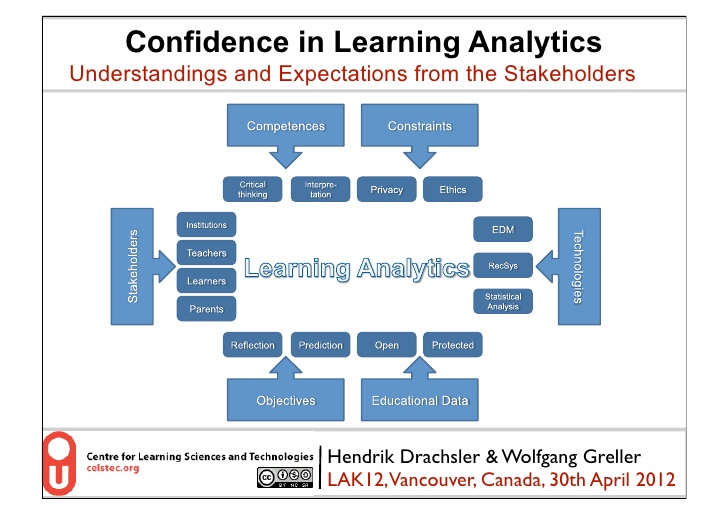

Confidence in Learning Analytics

This session reported on a recent Dutch study by questionnaire (including JISC input apparently).

Presents a nice framework encompassing several of the areas JISC are looking at within the Reconnaissance piece (competencies, constraints, ethics), but for Learning Analytics, obviously.

Do Educause have a learning analytics framework, I wonder? I recall their work on analytics taxonomies. The study claims the biggest benefit is in the student / teacher relationship. Reflection support is seen as key, with anonymisation and willingness to share coming second and third. Data ownership is key; breaches of privacy occur but were not a concern to respondents. Ethics are reported as being seen as less important.

There seem to be a lot of limitations in this survey, and these were declared. It’s based on very early adopter responses and, as such, issues are still emerging. It’s good therefore as a snapshot of the state of play at the time, but seems quite limited by methodology and scale. There’s an OU snapshot that might be worth contrasting it with.

See the data set

Looking for partners to extend the survey

This is very much a questionnaire research project.

Slides are here

The learning analytics lifecycle; closing the loop effectively

Doug Clow of the Open University. Learning analytics is like catnip for senior managers, says Doug. It sounds very much like Business Intelligence to me, which perhaps adds weight to the JISC BI maturity model having Predictive Modeling as the highest level.

Referencing the Gartner hype cycle but reflecting that we should be concerned about the learning, not the senior managers.

References no fewer than 3 frameworks, including Kolb, Schön and Laurillard’s Conversational Framework, plus control theory and the closed loop control system. Intellectual abstractions abound here. Doug is linking learning analytics to learning theory and suggests we should look to assessment and feedback as both outputs and inputs. All academic though.

Challenges, paradoxes and opportunities for mega open distance learning institutions

It’s another talk by the Open University, this time on retention and progression. This too is theoretical, academic postulation on the application of analytics, but based on a ‘third space’ where student identities are in flux and being developed / negotiated. A new curriculum-based approach to support, facilitated by learning analytics and based on the student journey, is being developed. It considers the student pathway, recognising that students’ identities change as they proceed. Not a great deal of detail on the practicalities in either of these OU talks. Perhaps they are early on.

Open Academic Analytics Initiative; Mining academic data to improve student retention

Two data sets (demographics and aptitude, and event logs) are used to develop a predictive model: an open source early alert system that flags risk of failure, followed by steps to address it. It builds on Sakai and the Pentaho BI Suite, and uses the Predictive Model Markup Language (PMML, a standard).
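To make the two-input idea concrete for myself, here is a minimal sketch in Python. It is not the project's code: the sample records, field names (`aptitude`, `prior_grade`), weights and threshold are all hypothetical, and a real system would learn its weights from historical outcomes rather than hard-code them.

```python
import math
from collections import Counter

# Hypothetical sample data; none of these values come from the talk.
profiles = {
    "s1": {"aptitude": 0.8, "prior_grade": 3.4},
    "s2": {"aptitude": 0.4, "prior_grade": 2.1},
    "s3": {"aptitude": 0.6, "prior_grade": 2.9},
}
event_log = [
    ("s1", "login"), ("s1", "forum_post"), ("s1", "login"), ("s1", "submit"),
    ("s2", "login"),
    ("s3", "login"), ("s3", "submit"),
]

# Hypothetical, hand-picked weights for a logistic score (assumption).
WEIGHTS = {"aptitude": -2.0, "prior_grade": -1.0, "events": -0.5}
BIAS = 4.0
THRESHOLD = 0.5


def risk_score(profile, n_events):
    """Return a 0..1 risk estimate from profile data plus activity count."""
    z = (
        BIAS
        + WEIGHTS["aptitude"] * profile["aptitude"]
        + WEIGHTS["prior_grade"] * profile["prior_grade"]
        + WEIGHTS["events"] * n_events
    )
    return 1.0 / (1.0 + math.exp(-z))


def early_alerts(profiles, event_log, threshold=THRESHOLD):
    """Combine both data sets and list students at or above the threshold."""
    counts = Counter(student_id for student_id, _ in event_log)
    scores = {
        student_id: risk_score(profile, counts.get(student_id, 0))
        for student_id, profile in profiles.items()
    }
    return sorted(sid for sid, score in scores.items() if score >= threshold)


if __name__ == "__main__":
    print(early_alerts(profiles, event_log))
```

With this made-up data only the low-activity, low-aptitude student is flagged. The portability question is essentially whether weights like these, fitted at one institution, still hold at another.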

They hope to answer the question: how portable are the models?

This is to be launched as the Sakai Early Alert System

Interesting on one level in that it’s an analytics module for a ‘best of breed’ product, namely Sakai.

This talk would be worth revisiting as it has some quantified evidence of impact.

I had a brief conversation about a tendency here to mix the term student with students, and a concern that trends in students might not be so accurately applied to an individual person.

Also that grades and effort are accurate predictors of retention and progression. Nothing actually new there then!