Earlier this year I was fortunate enough to attend the Learning Analytics and Knowledge (LAK) conference, where SoLAR, 'the Society for Learning Analytics Research', was launched. The aim is to enhance interdisciplinary discussion in support of the emerging field of analytics, and today I'm at the inaugural meeting of the UK branch at the OU, Milton Keynes.

The tag to follow is #flareuk, and I notice Doug Clow is here live blogging, so his blog is well worth a look as well as this one.

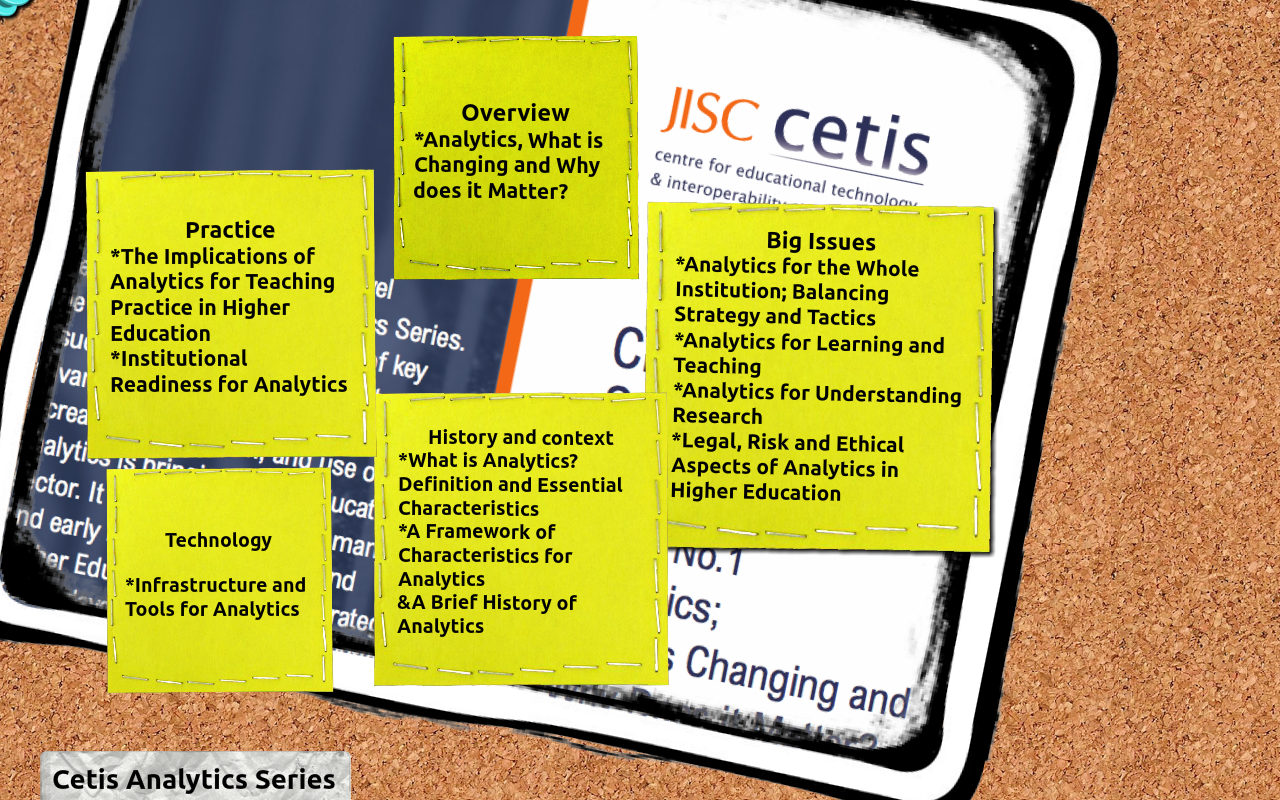

We ran a series of lightning talks, and first up was Sheila MacNeill talking about the JISC Analytics Series.

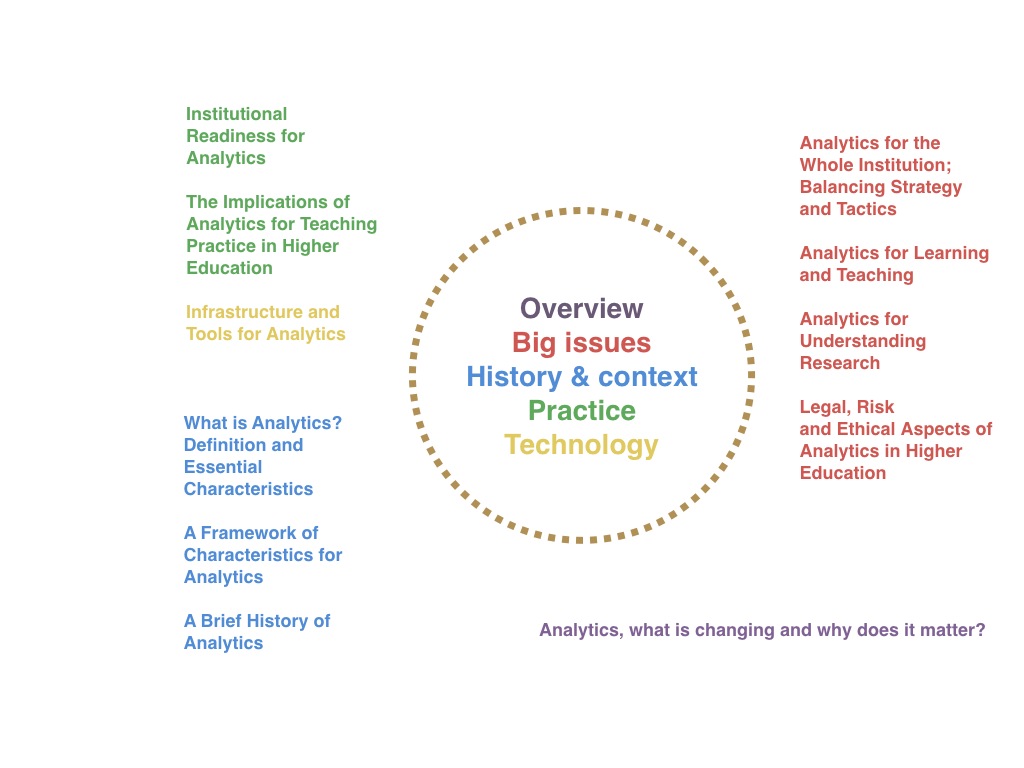

This is an initiative I have been involved in, and it has produced a series of 11 briefing papers on various aspects of analytics for tertiary education. We've already funded initiatives in Relationship Management (RM), Business Intelligence (BI) and Activity Data, and this latest series aims to give senior managers an overview of what is happening.

The first four papers, shown in red, are the detailed core ones: Whole Institution (out on Wednesday), Learning and Teaching, Research, and Legal, Risk and Ethical. As one might expect, the law is somewhat behind the technology when it comes to gathering data about people and what you do with it. Adam Cooper has written the blue ones. The green institutional readiness papers are the case studies, one from the OU and one from Bolton. Implications looks at practical aspects and quick wins. Infrastructure does what you'd expect from the title. The papers are coming out one or two a week until mid-January. They will be hosted here, and the timetable is here.

The papers don't answer all the questions; they are intended to provoke more questions, inform the debate, inform JISC and other funders, identify areas for collaboration and sharing, reach a common understanding of the jargon and language, and move things forward nationally and perhaps internationally via Educause (who have had analytics as their 2012 theme), SURF and suppliers/vendors.

For the next talks you'll need to refer to Doug's blog, as I missed them while typing the above!

Doug talked about Data Wrangling, examining how an analysis of corporate data for predictions highlights issues with the data itself, its capture, tools and skills, and what questions might be asked of analytics in future. At present the consumers of the analytics are faculty, but the aim is to include students and the 7,000 associate lecturers who deliver the teaching. There's a link to the Key Information Sets.

Adam Cooper introduced a personal analytics experiment looking at the Twitter followers of team members: an approach to analysing social network usage using Exponential Random Graph Models (ERGMs), noting mutuality, transitivity and homophily.
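For readers new to the terms, the three network properties Adam mentioned can be made concrete in a few lines of code. This is a minimal sketch of my own, not Adam's method: it assumes a follower graph stored as a list of hypothetical (follower, followed) pairs plus a hypothetical group label per account, and it only computes descriptive proportions. It does not fit an ERGM, which would estimate how strongly each of these tendencies shapes the network; that needs a dedicated tool such as the `ergm` package from the statnet suite in R.

```python
"""Descriptive mutuality, transitivity and homophily for a directed follower graph.

A sketch only: the account names and the group labels are hypothetical,
and this computes summary proportions rather than fitting an ERGM.
"""


def reciprocity(edges):
    """Mutuality: fraction of directed ties (a follows b) that are returned."""
    ties = set(edges)
    if not ties:
        return 0.0
    mutual = sum(1 for a, b in ties if (b, a) in ties)
    return mutual / len(ties)


def transitivity(edges):
    """Fraction of two-paths a->b->c (with c != a) closed by a direct tie a->c."""
    ties = set(edges)
    following = {}
    for a, b in ties:
        following.setdefault(a, set()).add(b)
    two_paths = closed = 0
    for a, direct in following.items():
        for b in direct:
            for c in following.get(b, set()):
                if c == a:
                    continue  # a->b->a is reciprocity, not a triad
                two_paths += 1
                if c in direct:
                    closed += 1
    return closed / two_paths if two_paths else 0.0


def homophily(edges, group):
    """Fraction of ties that link two accounts sharing the same group label."""
    ties = set(edges)
    if not ties:
        return 0.0
    same = sum(1 for a, b in ties if group[a] == group[b])
    return same / len(ties)


if __name__ == "__main__":
    # Hypothetical toy data: who follows whom, and each account's group.
    edges = [("ann", "bob"), ("bob", "ann"), ("bob", "cat"), ("ann", "cat")]
    group = {"ann": "team", "bob": "team", "cat": "external"}
    print(reciprocity(edges))       # 0.5
    print(transitivity(edges))      # 1.0
    print(homophily(edges, group))  # 0.5
```

Comparing proportions like these against what a random graph of the same size would produce is, roughly, the question an ERGM formalises.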

Next up was John Doove of SURF, fresh in from Educause and last week's SURF Education Days event.

SURF is a JISC-like organisation in the Netherlands that funds HE/FE institutions to examine issues through projects.

The SURF projects analysed data on students and their environment to improve education. There's a 7-minute video with a minute from each project available (in Dutch with English subtitles) here. John identified

The eBeam project came up next. Thanks to @sclater for the summary: students are measurably more likely to improve their performance if they are shown analytics relating to their assessments.

Next came Social Learning Analytics: Discourse, from Rebecca Ferguson at the OU. This work was aired at LAK 2012, and Rebecca updated us on its progress: analysing the interesting aspects of asynchronous learner conversations via a self-training framework for the automatic detection of exploratory discourse.

Dai Griffiths proposed that learning analytics supplies models, shaping what data we collect and how our models of education are changing. Those models are based on Customer Relationship Management: how we deal with customers, and what the lessons are. How can learning analytics (LA) influence the managerial aspects of education and the teaching? How can analytics support that practice, and what are the dangers if we don't pay attention to them? A favourite example of LA enforcing the wrong paradigm came when Dai spoke to a VP who stated 'we are learner centred, so our analytics is tied to learner grades'. Dai felt that this misses huge opportunities by looking at the outputs rather than the interactions leading to them. Dai has written a paper in the JISC Analytics Series.

Martin Hawksey talked about MOOC architecture: the scaled-up LMS versus the constructivist model, where students use their own spaces (blogs, social networks) to support their learning. He mentioned work by George Siemens and Stephen Downes. Martin is interested in using Google Spreadsheets to pull data from open sources into a central space to examine issues and answers such as:

Time limited, Analytically cloaked, Dark social, Infrastructure / Messy Data

'Openish' data was discussed, with the example of Twitter's open APIs being bound by agreements not to store that data in the cloud.

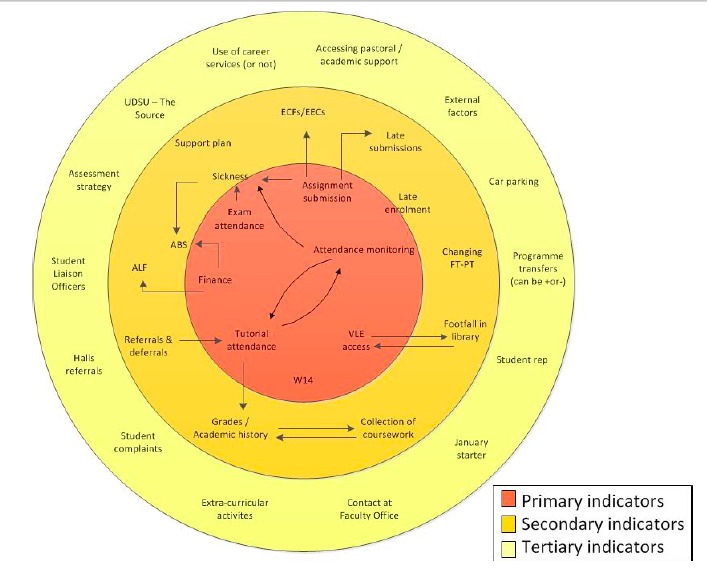

Next was Jean Mutton of Derby, an old colleague via the JISC-funded Relationship Management programmes (due to deliver a how-to resource in December 2012). Jean discussed identifying at-risk students in order to improve completion rates. Derby found that 7 different corporate systems hold the data needed on student interactions with the University and on evidence of students beginning to fall away. Grades come too late. Staff often have 'hunches'. Derby have published the information staff need in order to have the conversation with students about what really matters to them (the issues that make a great student experience) in their JISC-funded case study, coining the term 'Engagement Analytics'.

Mark Stubbs of Manchester Met described identifying patterns in a data warehouse to help address recruitment issues: can we predict why so many students failed to turn up after being offered a place? Another pattern was that young male students who leave course interactions to the last minute fail, and feeding that data back to students could be very powerful. Manchester Met are starting a major analytics project, reflecting their belief that they are sitting on vast amounts of data that could help improve things. The data flags some things that are taken for granted as not being well addressed.

Annika Wolff talked about her JISC-funded project, Retain Analytics, from my own portfolio. The aim was to model risk factors and their impact on student performance, and to visualise them via a dashboard for lecturers and module managers. The system was trained on historic data. VLE data proved the most interesting, and it was enhanced further when linked with assessment data. Again, this one is available as a JISC case study.

Chris Ballard of Tribal is working with a university to create a model that predicts student success, including defining what success means. The work combines data from multiple admin and activity sources (library, VLE etc.) and looks at how staff can correctly interpret the predictions, bringing together visualisations AND action. The latter is often lacking.

We then broke into themed groups. Here are the findings of the Data group, while Doug blogged the Retention group that I participated in.

I chatted with Simon Buckingham Shum, who drew my attention to his UNESCO policy briefing on Learning Analytics; it is jargon-free, apparently, so ideal for your senior manager audience.

In Summary

The field of Learning Analytics is young. In terms of the Gartner hype cycle it may be at the peak. The key seems to be defining the questions. Start small and keep it simple. Recognise the potential, but be realistic: the predictions may never be 100% reliable. Interpretation is key. Analytics gives the educator and learner access to information that has previously been the domain of the researcher, so data literacy and visualisations are essential, as is the context to act on the new information analytics provides. Legal and ethical issues matter too, but JISC have those covered with a forthcoming report in our Analytics Series. It was nice to meet so many analytics-inspired folks, and I look forward to seeing developments and hope to play a part in the future.

Thanks, Myles.

Great round-up of the session. I have linked to this post and Doug's in a summary of the day: http://blogs.cetis.ac.uk/sheilamacneill/2012/11/20/quick-links-from-solar-flare-meeting/

Sheila